4 min read

Mallory Mejias

April 21, 2026

Most AI conversations in associations start with the same question: which model should we use? Claude or ChatGPT? Gemini or something open-source? The question feels reasonable, but maybe it's the wrong place to begin.

The associations getting the most out of AI right now aren't choosing between models. They're layering several of them together. A typical well-designed AI workflow might touch four or five different models before it produces an answer — each one doing the work it's best suited for.

The real question isn't which model. It's how to design the system.

Think about how a complex project moves through your organization. Say you're redesigning the annual meeting. The work doesn't happen in one seat. It moves through stages, and different stages call for different kinds of thinking.

There's the strategy work — figuring out what the meeting should accomplish, what the program structure should look like, what bets you want to make. That's thoughtful, context-heavy work. It benefits from stepping back and seeing the whole picture.

Then there's the execution work. Once the plan is set, it gets broken into dozens of smaller tasks. Writing session descriptions. Coordinating with speakers. Building out the registration site. Each task is clearer in scope, more focused, and benefits from speed and follow-through rather than deep strategic context.

And then there's the review pass — looking at the finished pieces with the strategic plan back in mind, making sure everything holds together before it goes out the door.

Different work, different fit. Pulling someone off strategy work to type up session abstracts would slow everything down. Handing someone a list of vague strategic priorities with no context would set them up to struggle. The work moves well when the right kind of effort lands in the right place.

Good AI systems are designed on the same principle.

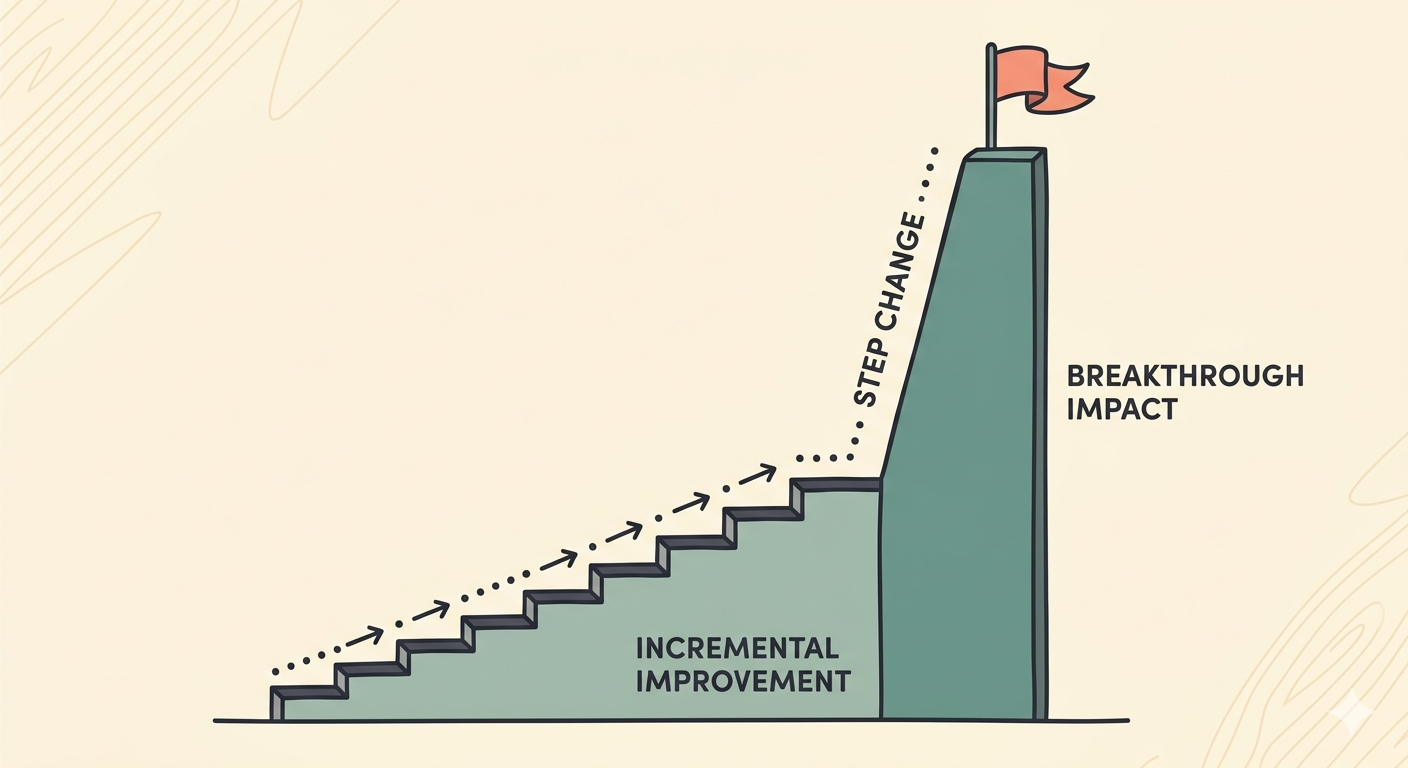

The most capable models — the big, expensive ones at the top of the intelligence curve — are built for the strategy work. Planning a complex analysis. Synthesizing findings from many sources. Reviewing finished output against the original goal. Tasks that require holding a lot of context and making nuanced judgment calls.

The smaller models — the fast, cheap ones — are built for volume. Processing thousands of documents. Summarizing individual chunks of content. Extracting specific fields. Classifying records against a known set of categories. Each one of these tasks is well-scoped, and a smaller model handles them quickly and at a fraction of the cost.

A typical workflow might look like this: a top-tier model on the front end to plan the work and structure the approach. Three or four smaller models running in parallel to do the bulk of the execution. A top-tier model at the end to review the pieces and package the final output.

Five models in the workflow. Each doing what it's best at.

When the architecture matches the work, three things happen at once.

Cost on the execution-heavy parts drops sharply. Frontier models can run twenty to fifty times the price per token of the smaller models that handle routine tasks well, so per-token cost on that layer falls by roughly the same factor. If the bulk of the work is execution, routing it through a frontier model means paying a premium for capability the task doesn't require.

Total response time drops too, because smaller models run faster. A research report that used to take forty seconds end-to-end can run in eight.

Quality often improves. The savings from right-sizing the work buy back room for more review loops, more parallel runs, and more careful synthesis — all of which tend to produce better final output than a single pass through a bigger model.

Put the economics concretely. When a research report costs $5 to generate, you run it carefully and sparingly. When the same report costs five cents, you run a hundred of them. One per chapter. One per member segment. One per product line. The drop in unit cost doesn't just save money; it opens up entirely new categories of work.
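To make the arithmetic tangible, here is a minimal sketch comparing one report run entirely on a frontier model against the same report with only planning and review on the frontier model. Every number in it is an assumption chosen for illustration: the per-million-token prices (set 40x apart, inside the twenty-to-fifty range above), the token counts, and the function names are hypothetical, not any vendor's actual pricing.

```python
# All prices and token counts below are hypothetical, chosen only to illustrate.
FRONTIER_PRICE = 10.00  # dollars per million tokens (assumed)
SMALL_PRICE = 0.25      # dollars per million tokens (assumed, 40x cheaper)


def cost(tokens: int, price_per_million: float) -> float:
    """Dollar cost of processing `tokens` at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million


def report_costs(plan_tokens: int, execution_tokens: int,
                 review_tokens: int) -> tuple[float, float]:
    """Return (all-frontier cost, layered cost) for one report."""
    total = plan_tokens + execution_tokens + review_tokens
    all_frontier = cost(total, FRONTIER_PRICE)
    # Layered: frontier model plans and reviews, small models execute.
    layered = (
        cost(plan_tokens + review_tokens, FRONTIER_PRICE)
        + cost(execution_tokens, SMALL_PRICE)
    )
    return all_frontier, layered


all_frontier, layered = report_costs(5_000, 480_000, 15_000)
print(f"all frontier: ${all_frontier:.2f}")
print(f"layered:      ${layered:.2f}")
print(f"savings:      {all_frontier / layered:.1f}x")
```

With these assumed inputs the report costs about $5.00 on the frontier model alone and about $0.32 when layered. The overall saving is smaller than the 40x price gap because planning and review still run on the frontier model, so the more of the work that is execution, the closer the saving gets to the per-token ratio.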

Take a question like: why did members renew at higher rates this year than last?

The honest answer is probably hiding across several data sources. Email engagement. Community activity. Survey responses. Event attendance. Support tickets. Maybe external industry context. No single system has the full picture, and no single dataset tells the whole story.

A well-designed AI workflow for this kind of question runs in layers.

The top-tier model starts by forming a working hypothesis. What are we actually trying to answer? What evidence would tell us? How should the analysis be structured so the answer is useful?

Smaller models then run in parallel across the data sources. One reads through email engagement patterns. One summarizes themes from community posts. One analyzes open-ended survey responses. One pulls attendance and participation data. Each is doing focused, well-scoped work at high volume.

The top-tier model returns at the end to pull everything together. It looks at what each smaller model found, cross-references it against the original hypothesis, and produces a coherent report with the findings ranked by strength of evidence.

The middle layer of work (the reading, the summarizing, the tagging, the pattern-matching across thousands of records) is where smaller models earn their keep. The beginning and the end, where judgment and synthesis matter most, are where the top-tier model earns its cost.
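The three layers described above can be sketched in a few lines. This is only an outline under stated assumptions: `top_tier_plan`, `small_model_run`, and `top_tier_review` are hypothetical stand-ins that return placeholder strings, and a real system would replace each with a call to whichever model your technology partner selects.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical stand-ins for real model calls; no vendor API is implied.
def top_tier_plan(question: str, sources: list[str]) -> list[str]:
    """Planner: turn the question into one well-scoped task per source."""
    return [f"Summarize {source} evidence for: {question}" for source in sources]


def small_model_run(task: str) -> str:
    """Worker: handle a single well-scoped task."""
    return f"[finding] {task}"


def top_tier_review(question: str, findings: list[str]) -> str:
    """Reviewer: check the pieces against the question and package them."""
    lines = [f"Report for: {question}"] + [f"- {finding}" for finding in findings]
    return "\n".join(lines)


def run_workflow(question: str, sources: list[str], max_workers: int = 4) -> str:
    tasks = top_tier_plan(question, sources)
    # Fan the tasks out in parallel; pool.map keeps results in task order.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        findings = list(pool.map(small_model_run, tasks))
    return top_tier_review(question, findings)


report = run_workflow(
    "Why did members renew at higher rates this year?",
    ["email engagement", "community posts", "survey responses", "event attendance"],
)
print(report)
```

The design point is the shape rather than the stubs: one expensive call at each end, and a parallel fan-out of cheap calls in the middle, where most of the volume sits.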

Another pattern is worth understanding, especially for anything member-facing: asking several models the same question in parallel.

Instead of one model answering a question, you have three or five answer the same question simultaneously, each working from slightly different angles or with different chunks of source material. A reviewer model then compares all the outputs and produces the strongest final answer.

This is how AI systems can move from about 99.9% accuracy to something closer to 99.9999%, a gap that sounds small but matters enormously when members are on the other end. A knowledge base assistant that's right 99.9% of the time still gets one answer wrong in every thousand. If that wrong answer goes to a physician checking the recommended dosage for a procedure in one of your journals, or an engineer verifying a safety threshold from your published standards, the cost isn't just to your credibility. It's to the member's work, and sometimes to the people on the other side of that work.
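A rough way to see where numbers like these come from is to work out how often several parallel answers would be wrong together. The sketch below rests on two simplifying assumptions that are not from the workflow described above: it swaps the reviewer model for a plain majority vote, and it treats each model's mistakes as fully independent. Real models tend to share blind spots, so actual gains are likely smaller. The function name is hypothetical.

```python
from math import comb


def majority_error_rate(p: float, n: int) -> float:
    """Chance that more than half of n independent answers are wrong,
    when each answer is wrong with probability p (binomial tail)."""
    needed = n // 2 + 1  # smallest number of wrong answers that wins the vote
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(needed, n + 1)
    )


for n in (1, 3, 5):
    err = majority_error_rate(0.001, n)
    print(f"{n} model(s): about 1 wrong answer in {1 / err:,.0f}")
```

Under those idealized assumptions, three models that are each wrong once in a thousand are wrong together roughly once in 330,000, which is about 99.9997% accuracy, and five models push the figure further still. A reviewer model that reads all the answers may do better or worse than a simple vote, so treat this as an upper-bound intuition rather than a forecast.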

This kind of parallel architecture was prohibitively expensive a year ago. It's now affordable enough to be standard practice for any high-stakes, member-facing use case.

You don't need to design the architecture yourself. That's what your technology partners and internal staff are for. But a few questions tell you quickly whether a proposed AI project is set up well:

Good answers to those questions tend to correlate with good outcomes.

Stop thinking about "AI" as one thing. Start thinking about it as a system with several parts, each doing what it's best at. The associations building this way right now are getting more done, faster, at lower cost, with fewer errors reaching members. The ones still looking for the single perfect model will keep paying more for less.

The technology is ready for this kind of thinking. The question is whether the way your organization plans around AI has caught up.
